Energy Efficient Cluster Usage

General Issue with Energy Efficiency

You are all aware of the rising energy costs and you are certainly careful to economize your energy consumption at home. But are you aware of the energy consumed by your computational jobs? An average compute job running on just a single node for one day may easily consume 10 kWh or even more.

That translates roughly to one of the following activities:

- toasting about 1330 slices of toast in a toaster

- continuously blow-drying your hair for about 10 hours

- actively working on a laptop for about 500 hours

- brewing 700 cups of coffee

Besides the energy costs, even this single job contributes to climate change by adding around 5 kg of CO2 to the atmosphere (based on the average German power mix), which is roughly equivalent to driving 30 km by car.
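The figures above can be reproduced with simple arithmetic. The following is a minimal sketch: the average node power of about 417 W and the emission factor of 0.5 kg CO2 per kWh are not stated in the text. They are assumptions back-calculated from the 10 kWh per day and 5 kg CO2 figures given above.

```python
# Rough energy and CO2 estimate for a compute job.
# Both constants are assumptions back-calculated from the figures in the text
# (10 kWh per node-day, 5 kg CO2 per 10 kWh); they are not measured values.
ASSUMED_NODE_POWER_W = 417.0      # average power draw of one node in watts
ASSUMED_KG_CO2_PER_KWH = 0.5      # emission factor of the power mix


def job_energy_kwh(nodes: int, hours: float,
                   node_power_w: float = ASSUMED_NODE_POWER_W) -> float:
    """Energy in kWh used by a job running on `nodes` nodes for `hours` hours."""
    return nodes * hours * node_power_w / 1000.0


def job_co2_kg(energy_kwh: float,
               kg_co2_per_kwh: float = ASSUMED_KG_CO2_PER_KWH) -> float:
    """CO2 emissions in kg for a given energy consumption in kWh."""
    return energy_kwh * kg_co2_per_kwh


single = job_energy_kwh(nodes=1, hours=24)
print(f"1 node, 24 h: {single:.1f} kWh, {job_co2_kg(single):.1f} kg CO2")

# The same estimate for one hundred such jobs.
batch = 100 * single
print(f"100 such jobs: {batch:.0f} kWh, {job_co2_kg(batch):.0f} kg CO2")
```

Replacing the two constants with values measured on a specific cluster gives a more realistic estimate.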

You get the point: Please always keep this in mind when submitting tens or even hundreds of jobs to the queue, just like you do when switching on your electrical devices at home. Also, please always think carefully about how many resources your jobs really need and whether your application really benefits from allocating more cores for the jobs. Application speedup is often limited and does not scale linearly with the number of dedicated cores. But energy consumption usually does ...
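To illustrate the last point, the sketch below uses Amdahl's law as a simple model of limited speedup. This model is brought in for illustration only and is not named in the text above. The parallel fraction of 0.9 is a hypothetical value, and energy is modeled as proportional to cores times runtime, which ignores idle power and other effects.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Speedup predicted by Amdahl's law for a given parallel fraction."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def relative_energy(parallel_fraction: float, cores: int) -> float:
    """Energy relative to the single-core run, assuming energy ~ cores * runtime."""
    runtime = 1.0 / amdahl_speedup(parallel_fraction, cores)
    return cores * runtime


PARALLEL_FRACTION = 0.9  # hypothetical: 90 % of the work can run in parallel

for cores in (1, 2, 4, 8, 16, 32, 64):
    s = amdahl_speedup(PARALLEL_FRACTION, cores)
    e = relative_energy(PARALLEL_FRACTION, cores)
    print(f"{cores:3d} cores: speedup {s:5.2f}, relative energy {e:5.2f}")
```

Under these assumptions the speedup can never exceed 10, while the modeled energy keeps growing linearly with the number of cores.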

Using as many resources as possible does not make a power user. Using them wisely does. If in doubt, just ask.

General recommendations

- Choose the most efficient algorithms for the given problem

- Run only necessary jobs: Please consider testing new setups and their output for validity prior to submitting a large number of similar jobs

- Start small: Run your problem on a small number of parallel entities (be it processes or threads) first

- Estimate the runtime of the parallel job as accurately as possible to increase the efficiency of the scheduling of the whole system

- Use the proper tools for development: If you develop your own code, please use the proper tools for debugging and parallel performance analysis. More information is available on the bwHPC Wiki.

- A look at the job feedback can help you determine if you are using the cluster efficiently

Code development recommendations

The above recommendations will help you use the cluster resources efficiently. Regarding software development, power efficiency obviously correlates strongly with computing performance, but also with memory usage, i.e. both the amount of memory used and memory efficiency.

Here, we have gathered a few results based on other research:

- Use an efficient programming language such as Rust, C, or C++; in general, any compiled language. Avoid interpreted languages such as Perl or Python. Since machine learning is a hot topic, this deserves a few words: ML code written in Python with TensorFlow or similar libraries makes heavy use of NumPy and other math packages, which rely on C-based implementations. Please make sure that you use the provided Python modules, which are optimized to use Intel MKL and other mathematical libraries.

An interesting paper is: Rui Pereira et al.: "Energy efficiency across programming languages: how do energy, time, and memory relate?", SLE 2017: Proc. of the 10th ACM SIGPLAN Int. Conf. on SW Language Eng., Oct. 2017, pp. 256–267, doi:10.1145/3136014.3136031

- Analyse memory access patterns

- For small tight loops checking for locks, use the `pause` instruction.
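As a small illustration of the remark on NumPy above, the sketch below computes the same dot product once with an interpreted Python loop and once with a single NumPy call that runs in compiled code. It assumes NumPy is available in the loaded Python module. The array size is arbitrary, and the timings will differ between machines.

```python
import time

import numpy as np


def dot_python(a, b):
    """Dot product with an explicit, interpreted Python loop."""
    total = 0.0
    for x, y in zip(a, b):
        total += x * y
    return total


def dot_numpy(a, b):
    """Same dot product, delegated to NumPy's compiled implementation."""
    return float(np.dot(a, b))


n = 200_000  # arbitrary problem size for illustration
a = np.arange(n, dtype=np.float64)
b = np.ones(n, dtype=np.float64)

start = time.perf_counter()
slow = dot_python(a, b)
t_loop = time.perf_counter() - start

start = time.perf_counter()
fast = dot_numpy(a, b)
t_numpy = time.perf_counter() - start

print(f"loop:  {t_loop:.4f} s, result {slow:.1f}")
print(f"numpy: {t_numpy:.4f} s, result {fast:.1f}")
```

Both variants return the same value; the difference lies only in where the loop is executed.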

Specific Scientific recommendations

Rework

Energy Efficiency on HPC Clusters

Energy consumption of data centers has been increasing continuously throughout the last decade. In 2020, the energy consumption of all data centers in Germany amounted to around 3 percent of the total electricity produced. Accompanying this large energy consumption are large-scale emissions of CO2 to the atmosphere and thus significant contributions to climate change. To illustrate this, an average compute job running on a single node for one day may easily consume 10 kWh or even more. That translates roughly to one of the following activities:

- toasting about 1330 slices of toast in a toaster

- continuously blow-drying your hair for about 10 hours

- actively working on a laptop for about 500 hours

- brewing 700 cups of coffee

Assuming that a typical bwHPC cluster has a few hundred compute nodes, this amounts to the energy consumption of a village for each cluster.

Using as many resources as possible does not make a power user. Using them wisely does. If in doubt, just ask.

Although a large share of this energy consumption is intrinsic to running large HPC clusters (even when its processors are idle, a cluster uses a lot of energy), efficient use of the available resources is important. The following gives a basic introduction to some of the most important aspects of energy-efficient HPC usage from a user perspective. References to further reading are provided, because both the range of scientific projects and the differences between the HPC clusters are too large to allow an exhaustive discussion of this topic here.

We can generally distinguish three questions when optimizing HPC jobs for efficiency.

- What do I want to do and why do I need an HPC Cluster for it?

- How many and which kind of hardware resources do I require for it?

- How do I optimize my code to use these resources most efficiently?

In the following, a short summary of how these questions should typically be addressed is given.

What do I want to do and why do I need an HPC Cluster for it?

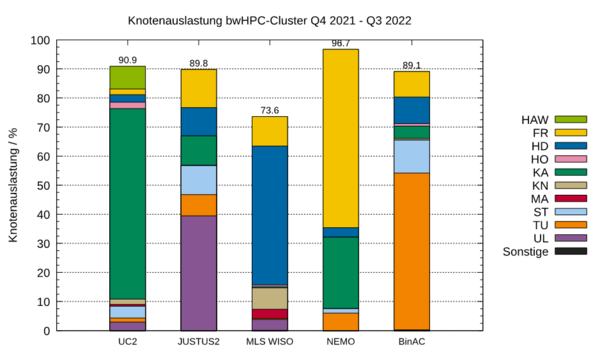

The bwHPC clusters are used to almost full capacity, as illustrated below, and running a job on an HPC node consumes a lot of energy, as shown above. Therefore, users are requested to run only necessary jobs.

Please consider testing new setups and their output for validity prior to submitting jobs that require lots of resources. This also includes projects where a lot of (smaller) similar jobs are submitted.

Make sure to double-check your jobs prior to submission. Having to discard the output data of an HPC project due to faulty input files wastes a lot of computational resources.

Finally, identifying the specific resource requirements of a given job is important both for allocating the optimal resources to your compute job and for deciding whether an HPC cluster is needed at all.

How many and which kind of hardware resources do I require for it?

Resource allocation is a crucial part of working on an HPC cluster. The right allocation depends on both the job and the specific cluster hardware and architecture available.

A small number of jobs and few resources

- Submit to the scheduler. No extended testing and resource scaling analysis are needed.

Medium-sized projects

- Run only necessary jobs: Please consider testing new setups and their output for validity prior to submitting a large number of similar jobs

- Start small: Run your problem on a small set of resources first.

- Use the proper tools for development: If you develop your own code, please use the proper tools for debugging and parallel performance analysis. More information is available on the bwHPC Wiki.

- A look at the job feedback can help you determine if you are using the cluster efficiently

Large projects

- Same approach as for medium-sized projects.

- Run a scaling analysis for your project with regard to how many resources work best. An introduction to how to do this can be found on the bwHPC wiki.
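A scaling analysis usually comes down to measuring the runtime for several core counts and computing speedup and parallel efficiency from those measurements. The sketch below shows this calculation. The timings are hypothetical placeholder values, not measurements from any bwHPC cluster, and the 70 % efficiency threshold is an arbitrary assumption.

```python
# Hypothetical wall-clock times in seconds, keyed by number of cores.
measured_runtimes = {1: 1000.0, 2: 520.0, 4: 280.0, 8: 160.0, 16: 110.0, 32: 95.0}


def scaling_table(runtimes):
    """Return (cores, speedup, efficiency) tuples relative to the smallest run."""
    base_cores = min(runtimes)
    base_time = runtimes[base_cores]
    rows = []
    for cores in sorted(runtimes):
        speedup = base_time / runtimes[cores]
        efficiency = speedup / (cores / base_cores)
        rows.append((cores, speedup, efficiency))
    return rows


def largest_efficient_core_count(runtimes, min_efficiency=0.7):
    """Largest core count whose parallel efficiency is still acceptable."""
    good = [c for c, _, eff in scaling_table(runtimes) if eff >= min_efficiency]
    return max(good)


for cores, speedup, efficiency in scaling_table(measured_runtimes):
    print(f"{cores:3d} cores: speedup {speedup:5.2f}, efficiency {efficiency:6.1%}")

print("Suggested core count:", largest_efficient_core_count(measured_runtimes))
```

With real measurements in place of the placeholder values, the core count at which efficiency starts to drop sharply is a reasonable upper limit for production runs.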

Many short jobs

- Handling via the scheduler is inefficient.

- Simple parallelization by hand is advisable. A basic introduction to how to parallelize your own code can be found on the bwHPC wiki.
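One simple form of such manual parallelization is to bundle many short tasks into a single job and distribute them over the allocated cores from within one script. The sketch below uses Python's standard `concurrent.futures` module. The function `simulate` is a hypothetical stand-in for a real short task, and the approach is one possible option, not necessarily the method described on the bwHPC wiki.

```python
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor


def simulate(parameter: float) -> float:
    """Hypothetical stand-in for one short, independent task."""
    return parameter ** 2


def run_bundle(parameters, max_workers=None, executor_class=ProcessPoolExecutor):
    """Run all tasks inside one job instead of submitting one job per task.

    ProcessPoolExecutor suits CPU-bound tasks; ThreadPoolExecutor can be
    passed instead for tasks that mostly wait on I/O.
    """
    with executor_class(max_workers=max_workers) as pool:
        # map() returns the results in the order of the input parameters.
        return list(pool.map(simulate, parameters))
```

Calling `run_bundle(range(1000), max_workers=8)` from a script protected by an `if __name__ == "__main__":` guard would process 1000 tasks in one job with eight worker processes. The value of `max_workers` should match the number of cores requested from the scheduler.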

How do I optimize my code to use these resources most efficiently?

The above recommendations will help you use the cluster resources more efficiently. Regarding software development, power efficiency obviously correlates strongly with computing performance, but also with memory usage, i.e. both the amount of memory used and memory efficiency.

Here, we have gathered a few results based on other research:

- Use an efficient programming language such as Rust, C, or C++; in general, any compiled language. Avoid interpreted languages such as Perl or Python. Since machine learning is a hot topic, this deserves a few words: ML code written in Python with TensorFlow or similar libraries makes heavy use of NumPy and other math packages, which rely on C-based implementations. Please make sure that you use the provided Python modules, which are optimized to use Intel MKL and other mathematical libraries.

Further reading: Rui Pereira, et al: "Energy efficiency across programming languages: how do energy, time, and memory relate?", SLE 2017: Proc. of the 10th ACM SIGPLAN Int. Conf. on SW Language Eng., Oct. 2017, pp. 256–267, doi:10.1145/3136014.3136031

- Analyse memory access patterns

- For small tight loops checking for locks, use the `pause` instruction.
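To make the point about memory access patterns concrete, the sketch below sums a two-dimensional NumPy array once along contiguous rows and once along strided columns. It assumes NumPy's default row-major (C order) layout. The array size is arbitrary, and the size of the timing difference depends on the hardware and its cache sizes.

```python
import time

import numpy as np


def sum_by_rows(matrix):
    """Traverse row by row: each row is contiguous in a C-ordered array."""
    total = 0.0
    for i in range(matrix.shape[0]):
        total += matrix[i, :].sum()
    return total


def sum_by_columns(matrix):
    """Traverse column by column: column elements are far apart in memory."""
    total = 0.0
    for j in range(matrix.shape[1]):
        total += matrix[:, j].sum()
    return total


matrix = np.ones((2000, 2000), dtype=np.float64)  # C order by default

for func in (sum_by_rows, sum_by_columns):
    start = time.perf_counter()
    result = func(matrix)
    elapsed = time.perf_counter() - start
    print(f"{func.__name__}: {elapsed:.4f} s, result {result:.0f}")
```

Both traversals compute the same sum; only the order in which memory is touched differs, which is what a memory access analysis of real code would look for.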

Summary: General Recommendations

- Choose the most efficient algorithms for the given problem

- Run only necessary jobs: Please consider testing new setups and their output for validity prior to submitting a large number of similar jobs

- Start small: Run your problem on a small number of parallel entities (be it processes or threads) first.

- Estimate the runtime of the parallel job as accurately as possible to increase the efficiency of the scheduling of the whole system

- Use the proper tools for development: If you develop your own code, please use the proper tools for debugging and parallel performance analysis. More information is available on the bwHPC Wiki.

- A look at the job feedback can help you determine if you are using the cluster efficiently