BwUniCluster3.0/Hardware and Architecture: Difference between revisions

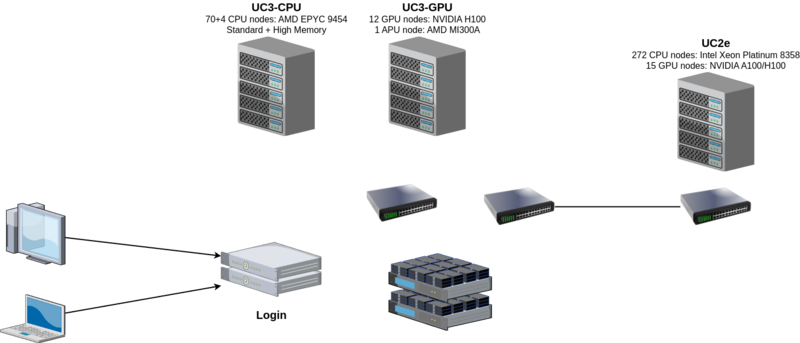

Revision as of 10:58, 4 December 2024

Architecture of bwUniCluster 3.0

The bwUniCluster 3.0 is a parallel computer with distributed memory. Each node of the system consists of two Intel Xeon or AMD EPYC processors, local memory, local storage, network adapters and optionally accelerators (NVIDIA A100 and H100, AMD Instinct MI300A). All nodes are connected by a fast InfiniBand interconnect. The parallel file system (Lustre) is connected to the InfiniBand switch of the compute cluster. This provides a fast and scalable parallel file system.

The operating system on each node is Red Hat Enterprise Linux (RHEL) 9.4.

The individual nodes of the system act in different roles. From an end user's point of view, the different groups of nodes are login nodes and compute nodes. File server nodes and administrative server nodes are not accessible by users.

Login Nodes

The login nodes are the only nodes directly accessible by end users. These nodes are used for interactive login, file management, program development, and interactive pre- and post-processing. There are two nodes dedicated to this service, and both can be reached from a single address. A DNS round-robin alias distributes login sessions to the login nodes.

Compute Nodes

The majority of nodes are compute nodes which are managed by a batch system. Users submit their jobs to the SLURM batch system and a job is executed when the required resources become available (depending on its fair-share priority).
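As a rough sketch of what such a job can look like, the script below requests a single task in the `dev_cpu` queue listed in Table 1. The resource values and the file name `hello_job.sh` are assumptions chosen for illustration, not site defaults; check the queue limits before reusing them.

```shell
#!/bin/bash
# Minimal Slurm job script (illustrative values, not site defaults).
#SBATCH --partition=dev_cpu   # queue name taken from Table 1
#SBATCH --ntasks=1            # a single task
#SBATCH --time=00:10:00       # assumed wall-clock limit of 10 minutes

# SLURM_JOB_ID is set by Slurm inside a job; fall back to "none" elsewhere.
job_info="job ${SLURM_JOB_ID:-none} on host $(uname -n)"
echo "Running ${job_info}"
```

Submitted with `sbatch hello_job.sh`, the job waits in the queue until the requested resources become available.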

Compute Resources

Login nodes

Compute nodes

CPU nodes

- Ice Lake: From UC2e

- Standard

- High Memory

GPU nodes

- NVIDIA GPU x4

- AMD GPU x4

- Ice Lake NVIDIA GPU x4

| | CPU nodes Ice Lake | CPU nodes Standard | CPU nodes High Memory | GPU nodes NVIDIA GPU x4 | GPU node AMD GPU x4 | GPU nodes Ice Lake NVIDIA GPU x4 | Login nodes |

|---|---|---|---|---|---|---|---|

| Availability in queues | cpu_il, dev_cpu_il | cpu, dev_cpu | highmem, dev_highmem | gpu_h100, dev_gpu_h100 | gpu_mi300 | gpu_a100_il / gpu_h100_il | - |

| Number of nodes | 272 | 70 | 4 | 12 | 1 | 15 | 2 |

| Processors | Intel Xeon Platinum 8358 | AMD EPYC 9454 | AMD EPYC 9454 | AMD EPYC 9454 | AMD Zen 4 | Intel Xeon Platinum 8358 | AMD EPYC 9454 |

| Number of sockets | 2 | 2 | 2 | 2 | 1 | 2 | 2 |

| Processor frequency | 2.6 GHz | 2.75 GHz | 2.75 GHz | 2.75 GHz | 3.7 GHz | 2.6 GHz | 2.75 GHz |

| Total number of cores | 64 | 96 | 96 | 96 | 24 | 64 | 96 |

| Main memory | 256 GB | 384 GB | 2.3 TB | 768 GB | 128 GB HBM3 | 512 GB | 384 GB |

| Local SSD | 1.8 TB NVMe | 3.84 TB NVMe | 15.36 TB NVMe | 15.36 TB NVMe | 7.68 TB NVMe | 6.4 TB NVMe | 7.68 TB SATA SSD |

| Accelerators | - | - | - | 4x NVIDIA H100 | 4x AMD Instinct MI300A | 4x NVIDIA A100 / H100 | - |

| Accelerator memory | - | - | - | 94 GB | APU | 80 GB / 94 GB | - |

| Interconnect | IB HDR200 | IB 2x NDR200 | IB 2x NDR200 | IB 4x NDR200 | IB 2x NDR200 | IB HDR200 | IB 1x NDR200 |

Table 1: Hardware overview and properties
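When sizing memory requests it can help to relate main memory to the number of cores. The sketch below divides the Table 1 values for three node types; the helper name `mem_per_core` is hypothetical, and the result is physical memory per core, so the amount actually usable by jobs is presumably somewhat lower.

```shell
#!/bin/bash
# Memory per core for selected node types, using the values from Table 1.
# Prints gigabytes per core with one decimal place.
mem_per_core() {
    local mem_gb="$1" cores="$2"
    LC_ALL=C awk -v mem="$mem_gb" -v cores="$cores" \
        'BEGIN { printf "%.1f\n", mem / cores }'
}

echo "CPU nodes Ice Lake:  $(mem_per_core 256 64) GB per core"
echo "CPU nodes Standard:  $(mem_per_core 384 96) GB per core"
echo "GPU nodes NVIDIA x4: $(mem_per_core 768 96) GB per core"
```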

File Systems

On bwUniCluster 2.0 the parallel file system Lustre is used for most globally visible user data. Lustre is open source, and Lustre solutions and support are available from different vendors. Nowadays, most of the biggest HPC systems use Lustre. An initial home directory on a Lustre file system is created automatically after account activation, and the environment variable $HOME holds its name. Users can create so-called workspaces on another Lustre file system for non-permanent data with a temporary lifetime. Another workspace file system based on flash storage is available for special requirements.

Within a batch job further file systems are available:

- The directory $TMPDIR is only available and visible on the local node. It is located on fast SSD storage devices.

- On request a parallel on-demand file system (BeeOND) is created which uses the SSDs of the nodes which were allocated to the batch job.

- On request the external LSDF Online Storage is mounted on the nodes which were allocated to the batch job. This file system is based on the parallel file system Spectrum Scale.

Some of the characteristics of the file systems are shown in Table 2.

| Property | $TMPDIR | BeeOND | $HOME | Workspace | Workspace on flash |

|---|---|---|---|---|---|

| Visibility | local node | nodes of batch job | global | global | global |

| Lifetime | batch job runtime | batch job runtime | permanent | max. 240 days | max. 240 days |

| Disk space | 960 GB - 6.4 TB (for details see Table 1) | n*750 GB | 1.2 PiB | 4.1 PiB | 236 TiB |

| Capacity quotas | no | no | yes; 1 TiB per user, for MA users 256 GiB; also per organization | yes; 40 TiB per user | yes; 1 TiB per user |

| Inode quotas | no | no | yes; 10 million per user, for MA users 2.5 million | yes; 30 million per user | yes; 5 million per user |

| Backup | no | no | yes | no | no |

| Read perf./node | 500 MB/s - 6 GB/s, depending on type of local SSD / job queue: 520 MB/s @ single / multiple; 800 MB/s @ multiple_e; 6600 MB/s @ fat; 6500 MB/s @ gpu_4; 6500 MB/s @ gpu_8 | 400 MB/s - 500 MB/s, depending on type of local SSDs / job queue: 500 MB/s @ multiple; 400 MB/s @ multiple_e | 1 GB/s | 1 GB/s | 1 GB/s |

| Write perf./node | 500 MB/s - 4 GB/s, depending on type of local SSD / job queue: 500 MB/s @ single / multiple; 650 MB/s @ multiple_e; 2900 MB/s @ fat; 2090 MB/s @ gpu_4; 4060 MB/s @ gpu_8 | 250 MB/s - 350 MB/s, depending on type of local SSDs / job queue: 350 MB/s @ multiple; 250 MB/s @ multiple_e | 1 GB/s | 1 GB/s | 1 GB/s |

| Total read perf. | n*500-6000 MB/s | n*400-500 MB/s | 18 GB/s | 54 GB/s | 45 GB/s |

| Total write perf. | n*500-4000 MB/s | n*250-350 MB/s | 18 GB/s | 54 GB/s | 38 GB/s |

global: all nodes of UniCluster access the same file system; local: each node has its own file system; permanent: files are stored permanently; batch job: files are removed at end of the batch job.

Table 2: Properties of the file systems

Selecting the appropriate file system

In general, you should separate your data and store it on the appropriate file system. Permanently needed data like software or important results should be stored below $HOME but capacity restrictions (quotas) apply. In case you accidentally deleted data on $HOME there is a chance that we can restore it from backup. Permanent data which is not needed for months or exceeds the capacity restrictions should be sent to the LSDF Online Storage or to the archive and deleted from the file systems. Temporary data which is only needed on a single node and which does not exceed the disk space shown in the table above should be stored below $TMPDIR. Data which is read many times on a single node, e.g. if you are doing AI training, should be copied to $TMPDIR and read from there. Temporary data which is used from many nodes of your batch job and which is only needed during job runtime should be stored on a parallel on-demand file system. Temporary data which can be recomputed or which is the result of one job and input for another job should be stored in workspaces. The lifetime of data in workspaces is limited and depends on the lifetime of the workspace which can be several months.

For further details please check the chapters below.

$HOME

The home directories of bwUniCluster 2.0 (uc2) users are located in the parallel file system Lustre. You have access to your home directory from all nodes of uc2. A regular backup of these directories to tape archive is done automatically. The directory $HOME is used to hold those files that are permanently used like source codes, configuration files, executable programs etc.

On uc2 there is a default user quota limit of 1 TiB and 10 million inodes (files and directories) per user. For users of University of Mannheim the limit is 256 GiB and 2.5 million inodes. You can check your current usage and limits with the command

$ lfs quota -uh $(whoami) $HOME

In addition to the user limit there is a limit of your organization (e.g. university) which depends on the financial share. This limit is enforced with so-called Lustre project quotas. You can show the current usage and limits of your organization with the following command:

lfs quota -ph $(grep $(echo $HOME | sed -e "s|/[^/]*/[^/]*$||") /pfs/data5/project_ids.txt | cut -f 1 -d\ ) $HOME
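The one-liner above is dense, so here is the same lookup split into steps as a hedged sketch. The helper name `org_dir_of` and the example path in the usage note are hypothetical; the only assumptions about `/pfs/data5/project_ids.txt` are those implied by the one-liner itself (exactly one line matches, and the project ID is its first space-separated field).

```shell
#!/bin/bash
# Step-by-step version of the organization quota one-liner above.

# Strip the last two path components from a home directory path.
org_dir_of() {
    echo "$1" | sed -e 's|/[^/]*/[^/]*$||'
}

ids_file=/pfs/data5/project_ids.txt   # path as used in the one-liner above

if [ -r "$ids_file" ] && command -v lfs >/dev/null 2>&1; then
    org_dir=$(org_dir_of "$HOME")
    # The first space-separated field of the matching line is the project ID
    # (assumes exactly one line matches).
    project_id=$(grep "$org_dir" "$ids_file" | cut -f 1 -d ' ')
    lfs quota -ph "$project_id" "$HOME"
else
    echo "Not on a cluster node: $ids_file or lfs is missing" >&2
fi
```

For a hypothetical home directory `/home/kit/scc/ab1234`, `org_dir_of` yields `/home/kit`, which is the string searched for in the project ID file.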

Workspaces

On uc2 workspaces can be used to store large non-permanent data sets, e.g. restart files or output data that has to be post-processed. The file system used for workspaces is also the parallel file system Lustre. This file system is especially designed for parallel access and for a high throughput to large files. It is able to provide high data transfer rates of up to 54 GB/s write and read performance when data access is parallel.

On uc2 there is a default user quota limit of 40 TiB and 30 million inodes (files and directories) per user. You can check your current usage and limits with the command

$ lfs quota -uh $(whoami) /pfs/work7

Note that the quotas include data and inodes for all of your workspaces and all of your expired workspaces (as long as they are not yet completely removed).

Workspaces have a lifetime and the data on a workspace expires as a whole after a fixed period. The maximum lifetime of a workspace on uc2 is 60 days, but it can be renewed at the end of that period 3 times to a total maximum of 240 days after workspace generation.
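The lifetime arithmetic can be sketched as follows: one allocation of 60 days plus three extensions of 60 days each gives 4 x 60 = 240 days. The allocation date below is hypothetical, the helper name `expiry_after` is an assumption, and the snippet relies on GNU `date` (the `-d` option). It also assumes each extension is made exactly when the previous period ends.

```shell
#!/bin/bash
# Expiry dates of a workspace allocated for 60 days and extended 3 times.
# Requires GNU date (the -d option).
start="2025-01-01"   # hypothetical allocation date
period=60            # maximum lifetime per allocation or extension, in days
extensions=3         # maximum number of extensions

expiry_after() {
    # $1: start date (YYYY-MM-DD), $2: number of days to add
    date -u -d "$1 +$2 days" +%F
}

first_expiry=$(expiry_after "$start" "$period")
final_expiry=$(expiry_after "$start" $(( period * (1 + extensions) )))

echo "First expiry: $first_expiry"
echo "Final expiry: $final_expiry"
```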

Creating, deleting, finding, extending and sharing workspaces is explained on the workspace page.

Reminder for workspace deletion

Normally you will get an email every day starting 7 days before a workspace expires. You can send yourself a calendar entry which reminds you when a workspace will be automatically deleted:

$ ws_send_ical <workspace> <email>

Restoring expired Workspaces

At expiration time your workspace will be moved to a special, hidden directory. On uc2 expired workspaces are currently kept for 30 days. During this time you can still restore your data into a valid workspace. The same is true for released workspaces but they are only kept until the next night. In order to restore an expired workspace, use

ws_restore -l

to get a list of your expired workspaces, and then restore them into an existing, active workspace (here with name my_restored):

ws_restore <full_name_of_expired_workspace> my_restored

NOTE: The expired workspace has to be specified using the full name as listed by ws_restore -l, including username prefix and timestamp suffix (otherwise, it cannot be uniquely identified).

The target workspace, on the other hand, must be given with just its short name as listed by ws_list, without the username prefix.

NOTE: ws_restore can only work on the same filesystem. So you have to ensure that the new workspace allocated with ws_allocate is placed on the same filesystem as the expired workspace. Therefore, you can use -F <filesystem> flag if needed.

Linking workspaces in Home

It might be valuable to have links to personal workspaces within a certain directory, e.g. below the user home directory. The command

ws_register <DIR>

will create and manage links to all personal workspaces within the directory <DIR>. Calling this command will do the following:

- The directory <DIR> will be created if necessary

- Links to all personal workspaces will be managed:

- Creates links to all available workspaces if not already present

- Removes links to released or expired workspaces

Improving Performance on $HOME and workspaces

The following recommendations might help to improve throughput and metadata performance on Lustre filesystems.

Improving Throughput Performance

Depending on your application some adaptations might be necessary if you want to reach the full bandwidth of the filesystems. Parallel filesystems typically stripe files over storage subsystems, i.e. large files are separated into stripes and distributed to different storage subsystems. In Lustre, the size of these stripes (sometimes also called chunks) is called stripe size and the number of used storage subsystems is called stripe count.

When you are designing your application you should consider that the performance of parallel filesystems is generally better if data is transferred in large blocks and stored in few large files. In more detail, to increase the throughput performance of a parallel application, the following aspects should be considered:

- collect large chunks of data and write them sequentially at once,

- to exploit complete filesystem bandwidth use several clients,

- avoid competitive file access by different tasks or use blocks with boundaries at stripe size (default is 1MB),

- if files are small enough for the SSDs and are only used by one process store them on $TMPDIR.
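A minimal sketch of the first and third points: writing data sequentially in blocks whose size equals the default stripe size, so that block boundaries fall on stripe boundaries. The file name, the block count, and the use of `/dev/zero` as a stand-in for application output are assumptions for illustration.

```shell
#!/bin/bash
# Write a file sequentially in blocks of 1 MiB (the default stripe size).
block_size=1048576     # 1 MiB in bytes
block_count=4          # hypothetical amount of data: 4 blocks

outfile=$(mktemp ./aligned_write.XXXXXX)

# /dev/zero stands in for real application output.
dd if=/dev/zero of="$outfile" bs="$block_size" count="$block_count" 2>/dev/null

written=$(wc -c < "$outfile" | tr -d ' ')
echo "Wrote $written bytes in $block_count blocks"
rm -f "$outfile"
```

An application doing its own I/O would achieve the same effect by buffering output and issuing writes in multiples of the stripe size.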

With previous Lustre versions adapting the Lustre stripe count was the most important optimization. However, for the workspaces of uc2 the new Lustre feature Progressive File Layouts has been used to define file striping parameters. This means that the stripe count is adapted as the file size grows. In normal cases users no longer need to adapt file striping parameters, even if they have very large files or want to reach better performance.

If you know what you are doing you can still change striping parameters, e.g. the stripe count, of a directory and of newly created files. New files and directories inherit the stripe count from the parent directory. E.g. if you want to enhance throughput on a single very large file which is created in the directory $HOME/my_output_dir you can use the command

$ lfs setstripe -c-1 $HOME/my_output_dir

to change the stripe count to -1 which means that all storage subsystems of the file system are used to store that file. If you change the stripe count of a directory the stripe count of existing files inside this directory is not changed. If you want to change the stripe count of existing files, change the stripe count of the parent directory, copy the files to new files, remove the old files and move the new files back to the old name. In order to check the stripe setting of the file my_file use

$ lfs getstripe my_file

Also note that changes on the striping parameters (e.g. stripe count) are not saved in the backup, i.e. if directories have to be recreated this information is lost and the default stripe count will be used. Therefore, you should annotate for which directories you made changes to the striping parameters so that you can repeat these changes if required.
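The copy-and-rename procedure for existing files described above can be sketched as a small helper. The function name `restripe_by_copy` and the temporary file suffix are hypothetical. The sketch assumes the striping of the parent directory has already been changed with `lfs setstripe`, and that there is enough free quota to hold a second copy of the file for a short time.

```shell
#!/bin/bash
# Rewrite an existing file so that it picks up the striping of its directory.
# On a non-Lustre file system this is just a copy followed by a rename.
restripe_by_copy() {
    local file="$1"
    local tmp="${file}.restripe.$$"   # hypothetical temporary name
    # The copy is a new file and inherits the directory's current striping.
    cp -p -- "$file" "$tmp" || return 1
    # Renaming over the original removes the old file and restores the name.
    mv -f -- "$tmp" "$file"
}
```

After calling `restripe_by_copy` on a file, `lfs getstripe` on that file should report the new layout.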

Improving Metadata Performance

Metadata performance on parallel file systems is usually not as good as with local filesystems. In addition, it is usually not scalable, i.e. a limited resource. Therefore, you should avoid metadata operations whenever possible. For example, it is much better to have few large files than lots of small files. In more detail, to increase the metadata performance of a parallel application, the following aspects should be considered:

- avoid creating many small files,

- avoid competitive directory access, e.g. by creating files in separate subdirectories for each task,

- if many small files are only used within a batch job and accessed by one process store them on $TMPDIR,

- change the default colorization setting of the command ls (see below).
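The second point, separate subdirectories per task, can be sketched like this. `SLURM_PROCID` is the task rank that Slurm sets for each task started with `srun`; the output location `./output` and the variable `OUT_ROOT` are assumptions for illustration.

```shell
#!/bin/bash
# Give every task of a parallel job its own output subdirectory.
# SLURM_PROCID is set by srun for each task; it defaults to 0 here so the
# snippet also runs outside of a job.
out_root="${OUT_ROOT:-./output}"            # hypothetical output location
task_dir="${out_root}/task_${SLURM_PROCID:-0}"

mkdir -p "$task_dir"
echo "result of task ${SLURM_PROCID:-0}" > "${task_dir}/result.txt"
```

Because each task creates files only in its own directory, the tasks do not compete for updates to the same directory.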

On modern Linux systems, the GNU ls command often uses colorization by default to visually highlight the file type; this is especially true if the command is run within a terminal session. This is because the default shell profile initializations usually contain an alias directive similar to the following for the ls command:

$ alias ls="ls --color=tty"

However, running the ls command in this way for files on a Lustre file system requires a stat() call to be used to determine the file type. This can result in a performance overhead, because the stat() call always needs to determine the size of a file, and that in turn means that the client node must query the object size of all the backing objects that make up a file. As a result of the default colorization setting, running a simple ls command on a Lustre file system often takes as much time as running the ls command with the -l option (the same is true if the -F, -p, or the --classify option, or any other option that requires information from a stat() call, is used). To avoid this performance overhead when using ls commands, add an alias directive similar to the following to your shell startup script:

$ alias ls="ls --color=never"

Workspaces on flash storage

There is another workspace file system for special requirements available. The file system is called full flash pfs and is based on the parallel file system Lustre.

Advantages of this file system

- All storage devices are based on flash (no hard disks) with low access times. Hence performance is better compared to other parallel file systems for read and write access with small blocks and with small files, i.e. IOPS rates are improved.

- The file system is mounted on bwUniCluster 2.0 and HoreKa, i.e. it can be used to share data between these clusters.

Access restrictions

Only HoreKa users or KIT users of bwUniCluster 2.0 can use this file system.

Using the file system

As KIT or HoreKa user you can use the file system in the same way as a normal workspace. You just have to specify the name of the flash-based workspace file system using the option -F to all the commands that manage workspaces. On bwUniCluster 2.0 it is called ffuc, on HoreKa it is ffhk. For example, to create a workspace with name myws and a lifetime of 60 days on bwUniCluster 2.0 execute:

ws_allocate -F ffuc myws 60

If you want to use the full flash pfs on bwUniCluster 2.0 and HoreKa at the same time, please note that you only have to manage a particular workspace on one of the clusters since the name of the workspace directory is different. However, the path to each workspace is visible and can be used on both clusters.

Other features are similar to normal workspaces. For example, we are able to restore expired workspaces for a few weeks, and you have to open a ticket to request the restore. There are quota limits with a default limit of 1 TiB capacity and 5 million inodes per user. You can check your current usage with

lfs quota -uh $(whoami) /pfs/work8

$TMPDIR

The environment variable $TMPDIR contains the name of a directory which is located on the local SSD of each node. This means that different tasks of a parallel application use different directories when they do not utilize the same node. Although $TMPDIR points to the same path name for different nodes of a batch job, the physical location and the content of this directory path on these nodes is different.

This directory should be used for temporary files being accessed from the local node during job runtime. It should also be used if you read the same data many times from a single node, e.g. if you are doing AI training. In this case you should copy the data at the beginning of your batch job to $TMPDIR and read the data from there, see usage example below.

The $TMPDIR directory is located on extremely fast local SSD storage devices. This means that performance on small files is much better than on the parallel file systems. The capacity of the local SSDs for each node type is different and can be checked in Table 1 above. The capacity of $TMPDIR is at least 800 GB.

Each time a batch job is started, a subdirectory is created on the SSD of each node and assigned to the job. $TMPDIR is set to the name of the subdirectory and this name contains the job ID so that it is unique for each job. At the end of the job the subdirectory is removed.

On login nodes $TMPDIR also points to a fast directory on a local SSD disk but this directory is not unique. It is recommended to create your own unique subdirectory on these nodes. This directory should be used for the installation of software packages. This means that the software package to be installed should be unpacked, compiled and linked in a subdirectory of $TMPDIR. The real installation of the package (e.g. make install) should be made into the $HOME folder.
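One way to get such a unique subdirectory is `mktemp -d`, as in the following sketch. The helper name `make_build_dir` and the `build.` prefix are hypothetical.

```shell
#!/bin/bash
# Create a unique build directory below a base directory such as $TMPDIR.
make_build_dir() {
    local base="${1:?base directory required}"
    mktemp -d "${base}/build.XXXXXX"
}

if [ -n "${TMPDIR:-}" ] && [ -d "$TMPDIR" ]; then
    build_dir=$(make_build_dir "$TMPDIR")
    echo "Unpack, compile and link in: $build_dir"
    # ... build here, then install the package into a folder below $HOME ...
    rmdir "$build_dir"   # clean up the (still empty) example directory
fi
```

In a real installation the build directory would be removed with `rm -rf` once the package has been installed below $HOME.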

Note that you should not use /tmp or /scratch! Please use $TMPDIR instead.

Usage example for $TMPDIR

We will provide an example for using $TMPDIR and describe efficient data transfer to and from $TMPDIR.

If you have a data set with many files which is frequently used by batch jobs you should create a compressed archive on a workspace. This archive can be extracted on $TMPDIR inside your batch jobs. Such an archive can be read efficiently from a parallel file system since it is a single huge file. On a login node you can create such an archive with the following steps:

# Create a workspace to store the archive

[ab1234@uc2n997 ~]$ ws_allocate data-ssd 60

# Create the archive from a local dataset folder (example)

[ab1234@uc2n997 ~]$ tar -cvzf $(ws_find data-ssd)/dataset.tgz dataset/

Inside a batch job extract the archive on $TMPDIR, read input data from $TMPDIR, store results on $TMPDIR and save the results on a workspace:

#!/bin/bash

# very simple example on how to use local $TMPDIR

#SBATCH -N 1

#SBATCH -t 24:00:00

# Extract compressed input dataset on local SSD

tar -C "$TMPDIR/" -xvzf "$(ws_find data-ssd)/dataset.tgz"

# The application reads data from dataset on $TMPDIR and writes results to $TMPDIR

myapp -input "$TMPDIR/dataset/myinput.csv" -outputdir "$TMPDIR/results"

# Before job completes save results on a workspace

rsync -av "$TMPDIR/results" "$(ws_find data-ssd)/results-${SLURM_JOB_ID}/"
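If the application writes many small result files, it may be preferable to save them as a single archive instead of copying them one by one, in line with the metadata recommendations for Lustre. The following is a sketch under that assumption; the function name `save_results_archive` is hypothetical, and the workspace name `data-ssd` is the one used in the example above.

```shell
#!/bin/bash
# Pack a results directory into one compressed archive in a target directory.
# $1: directory containing "results", $2: target directory, $3: archive name
save_results_archive() {
    local src_parent="$1" target_dir="$2" name="$3"
    mkdir -p "$target_dir" || return 1
    tar -C "$src_parent" -czf "${target_dir}/${name}.tgz" results
}

# Inside a batch job this could be called as follows (guarded so that the
# snippet does nothing outside of a job with the workspace tools available):
if [ -n "${SLURM_JOB_ID:-}" ] && command -v ws_find >/dev/null 2>&1; then
    save_results_archive "$TMPDIR" "$(ws_find data-ssd)" "results-${SLURM_JOB_ID}"
fi
```

The archive can later be unpacked on $TMPDIR of another job, in the same way as the input dataset.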

LSDF Online Storage

In some cases it is useful to have access to the LSDF Online Storage on the HPC clusters as well. Therefore the LSDF Online Storage is mounted on the login and datamover nodes. Furthermore, it can be used on the compute nodes during the job runtime with the constraint flag "LSDF" (Slurm common features). There is also an example of LSDF batch usage: Slurm LSDF example.

#!/bin/bash

#SBATCH ...

#SBATCH --constraint=LSDF

For the usage of the LSDF Online Storage the following environment variables are available: $LSDF, $LSDFPROJECTS, $LSDFHOME.
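A job can check at startup whether these variables are actually set before it relies on them. The sketch below does only that; the helper name `report_var` and the resource values are assumptions, while the constraint flag and the variable names are the ones given above.

```shell
#!/bin/bash
#SBATCH --ntasks=1
#SBATCH --time=00:10:00       # assumed wall-clock limit
#SBATCH --constraint=LSDF     # request nodes with the LSDF Online Storage mounted

# Print one line per variable: its value, or a note that it is not set.
# $1: variable name, $2: variable value (may be empty)
report_var() {
    if [ -n "$2" ]; then
        echo "$1=$2"
    else
        echo "$1 is not set"
    fi
}

report_var LSDF "${LSDF:-}"
report_var LSDFPROJECTS "${LSDFPROJECTS:-}"
report_var LSDFHOME "${LSDFHOME:-}"
```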

Please request storage projects in the LSDF Online Storage separately:

LSDF Storage Request.

BeeOND (BeeGFS On-Demand)

Users of the UniCluster can request a private BeeOND (on-demand BeeGFS) parallel filesystem for each job. The file system is created during job startup and purged after your job.

- IMPORTANT:

- All data on the private filesystem will be deleted after your job. Make sure you have copied your data back to the global filesystem (within job), e.g., $HOME or any workspace.

BeeOND/BeeGFS can be used like any other parallel file system. Tools like cp or rsync can be used to copy data in and out.

For detailed usage see here: Request on-demand file system

Backup and Archiving

There are regular backups of all data in the home directories; however, ACLs and extended attributes are not backed up.

Please open a ticket if you need data restored from the backup.